SaaS Company Adds AI-Powered Semantic Search Using LangChain.js and Qdrant

How a B2B SaaS company added AI-powered semantic search and document Q&A to its product using LangChain.js and Qdrant, embedded into its existing Node.js backend in 6 weeks without separate ML infrastructure or a Python environment.

Key Benefits

- Semantic search added

- Native Node.js stack

- 6-week delivery

The Challenge

A B2B SaaS company's product managed large volumes of user-generated documents and records. Their existing keyword search returned poor results when users searched in natural language or used different terminology from what was in the documents. The product team wanted to add semantic search and a document Q&A feature — but did not have machine learning expertise in-house, did not want to manage a separate Python ML environment alongside their Node.js backend, and needed a fixed-cost, defined-scope engagement rather than an open-ended ML research project.

The Solution

Techseria embedded a LangChain.js and Qdrant semantic search microservice into the company's existing Node.js backend. User documents are automatically indexed into Qdrant on upload. Search queries are embedded and matched semantically against the vector index, returning relevant results regardless of exact keyword match. A document Q&A endpoint using LangChain.js and Azure OpenAI lets users ask questions about their documents and receive sourced answers.

The microservice is deployed on Azure as a containerised Node.js service, callable from the existing product API. No Python, no separate ML platform, no new vendor dependency beyond Azure OpenAI and Qdrant Cloud. The entire implementation was scoped and delivered in 6 weeks from a 2-week Discovery Workshop.
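
To make the index-on-upload and query-time matching flow concrete, here is a minimal TypeScript sketch. It is not the delivered implementation: the `InMemoryVectorIndex` class, the `Embedder` type, and the `toyEmbed` function are hypothetical stand-ins so the example runs on its own. In the system described above, Qdrant would hold the vectors and an Azure OpenAI embedding model would produce them.

```typescript
// Sketch only: an in-memory stand-in for the vector index described above.
// All names here are hypothetical and chosen for illustration.

type Vector = number[];

interface IndexedChunk {
  documentId: string;
  text: string;
  vector: Vector;
}

interface SearchHit {
  documentId: string;
  text: string;
  score: number;
}

// Assumed embedder shape; a real one would call an embedding model over the network.
type Embedder = (text: string) => Promise<Vector>;

// Cosine similarity between two vectors of equal length (0 if either is all zeros).
function cosineSimilarity(a: Vector, b: Vector): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

class InMemoryVectorIndex {
  private chunks: IndexedChunk[] = [];
  private readonly embed: Embedder;

  constructor(embed: Embedder) {
    this.embed = embed;
  }

  // Intended to be called from the upload handler so new documents are searchable at once.
  async indexDocument(documentId: string, text: string): Promise<void> {
    // Drop any earlier version of the same document before re-indexing it.
    this.chunks = this.chunks.filter((c) => c.documentId !== documentId);
    const vector = await this.embed(text);
    this.chunks.push({ documentId, text, vector });
  }

  // Embed the query, score every stored chunk, and return the best matches first.
  async search(query: string, limit = 3): Promise<SearchHit[]> {
    const queryVector = await this.embed(query);
    return this.chunks
      .map((c) => ({
        documentId: c.documentId,
        text: c.text,
        score: cosineSimilarity(queryVector, c.vector),
      }))
      .sort((a, b) => b.score - a.score)
      .slice(0, limit);
  }
}

// Toy embedder: one dimension per group of related words. It only imitates the
// idea that related terms land close together; it is not a real embedding model.
const toyConcepts: string[][] = [
  ["invoice", "bill", "payment"],
  ["contract", "agreement", "terms"],
  ["holiday", "vacation", "leave"],
];

const toyEmbed: Embedder = async (text) => {
  const words = text.toLowerCase().split(/\W+/);
  return toyConcepts.map((group) => words.filter((w) => group.includes(w)).length);
};
```

With this toy embedder, a query such as "how much leave do I get" ranks a document titled "Employee vacation policy" above an invoice, even though the word "leave" never appears in it. That is the behaviour keyword search could not provide. Splitting long documents into chunks before embedding is omitted here for brevity.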

Impact by the numbers

- 6 Weeks: Full Delivery. Discovery Workshop to production integration in 6 weeks, including the embedding pipeline, search API, and Q&A endpoint.

Results

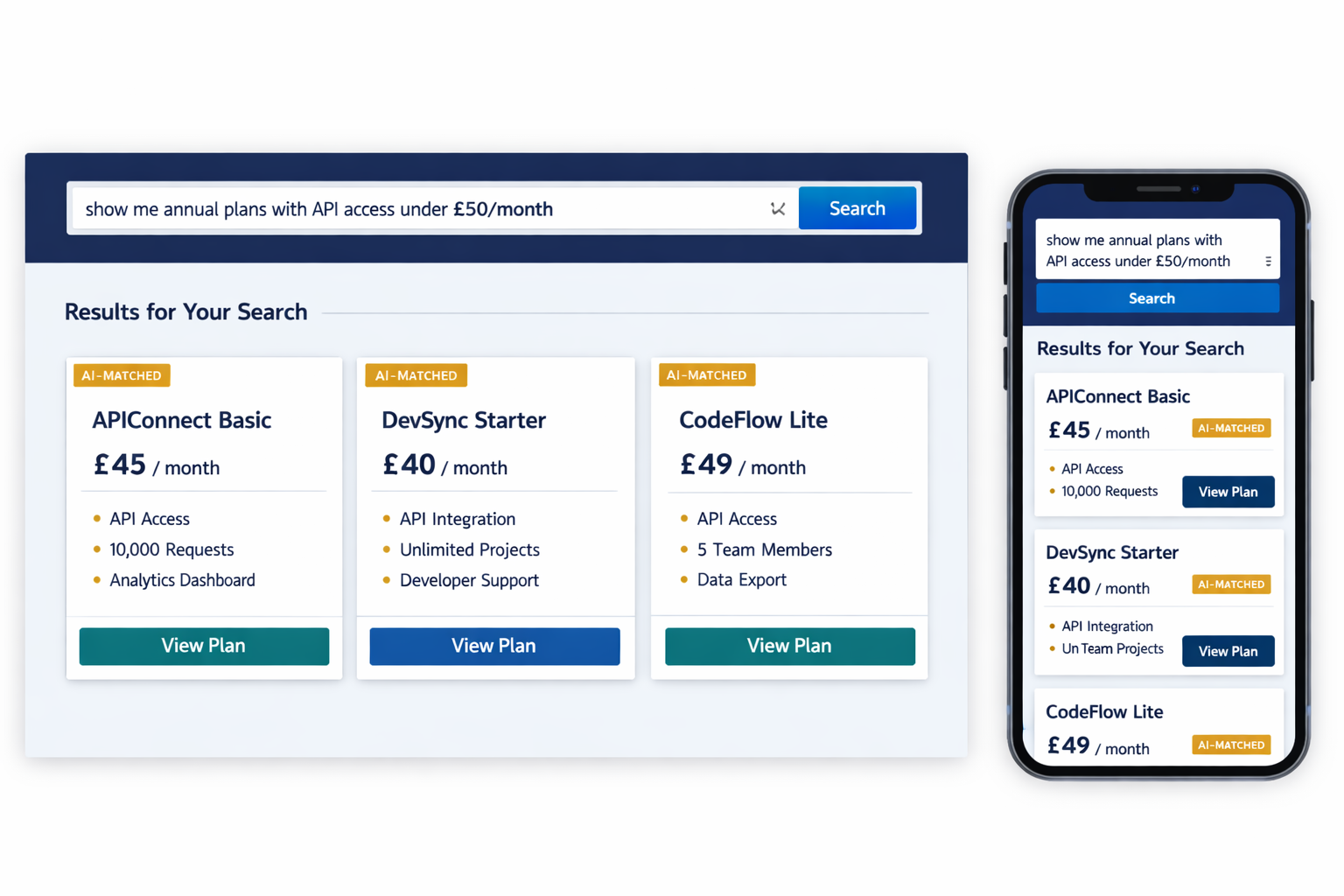

- Semantic search capability added: Users now find relevant content with natural language queries, even when the query does not share exact keywords with the document text.

- Sourced document Q&A answers: Users ask questions about their documents and receive answers with citations to the specific source document.

- Native Node.js stack, no Python required: Embedded into the existing Node.js backend as a microservice with no separate Python ML environment.

- Automatic document indexing on upload: New documents are indexed into Qdrant automatically on upload, so search is available immediately without manual processing.

- Scales with user document volume: The Qdrant vector index scales with the growth of each customer's document library without architectural changes.
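
One way a Q&A endpoint can return answers with citations is to number the retrieved passages in the prompt and map the model's `[n]` markers back to document IDs. The TypeScript sketch below illustrates that pattern under stated assumptions: `ChatModel`, `buildPrompt`, `extractSources`, and `answerQuestion` are hypothetical names, the prompt wording is invented for illustration, and the model is injected as a plain function rather than a real LangChain.js or Azure OpenAI client.

```typescript
// Sketch only: hypothetical helpers showing how sourced answers can be assembled.

interface RetrievedChunk {
  documentId: string;
  text: string;
}

interface SourcedAnswer {
  answer: string;
  sources: string[]; // document IDs the answer actually cited
}

// Assumed model shape: takes a prompt, returns the completion text.
type ChatModel = (prompt: string) => Promise<string>;

// Number each retrieved passage so the model can refer to it as [1], [2], ...
function buildPrompt(question: string, chunks: RetrievedChunk[]): string {
  const context = chunks
    .map((c, i) => `[${i + 1}] (${c.documentId}) ${c.text}`)
    .join("\n");
  return [
    "Answer the question using only the numbered sources below.",
    "Cite sources as [n]. If the sources do not contain the answer, say so.",
    "",
    context,
    "",
    `Question: ${question}`,
  ].join("\n");
}

// Map [n] markers in the answer back to document IDs, ignoring out-of-range numbers.
function extractSources(answer: string, chunks: RetrievedChunk[]): string[] {
  const cited = new Set<string>();
  const marker = /\[(\d+)\]/g;
  let match = marker.exec(answer);
  while (match !== null) {
    const chunk = chunks[Number(match[1]) - 1];
    if (chunk) cited.add(chunk.documentId);
    match = marker.exec(answer);
  }
  return Array.from(cited);
}

// Build the prompt, call the injected model, and attach the cited documents.
async function answerQuestion(
  question: string,
  chunks: RetrievedChunk[],
  model: ChatModel,
): Promise<SourcedAnswer> {
  const answer = await model(buildPrompt(question, chunks));
  return { answer, sources: extractSources(answer, chunks) };
}
```

Injecting the model as a function keeps the citation logic testable without network access. In a production service the retrieved chunks would come from the vector search step and the model call would go to the hosted chat model.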

Technologies Used

- LangChain.js

- Qdrant Vector Database

- Azure OpenAI Service

- Node.js / TypeScript

- Docker / Azure Container Apps

Client Voice

"We wanted semantic search in our product but didn't have ML expertise in-house and didn't want to introduce a Python stack into our Node.js codebase. Techseria delivered exactly what we needed: LangChain.js and Qdrant embedded as a microservice — our existing backend calls it like any other API. The document Q&A feature has become one of the most used parts of our product since launch."